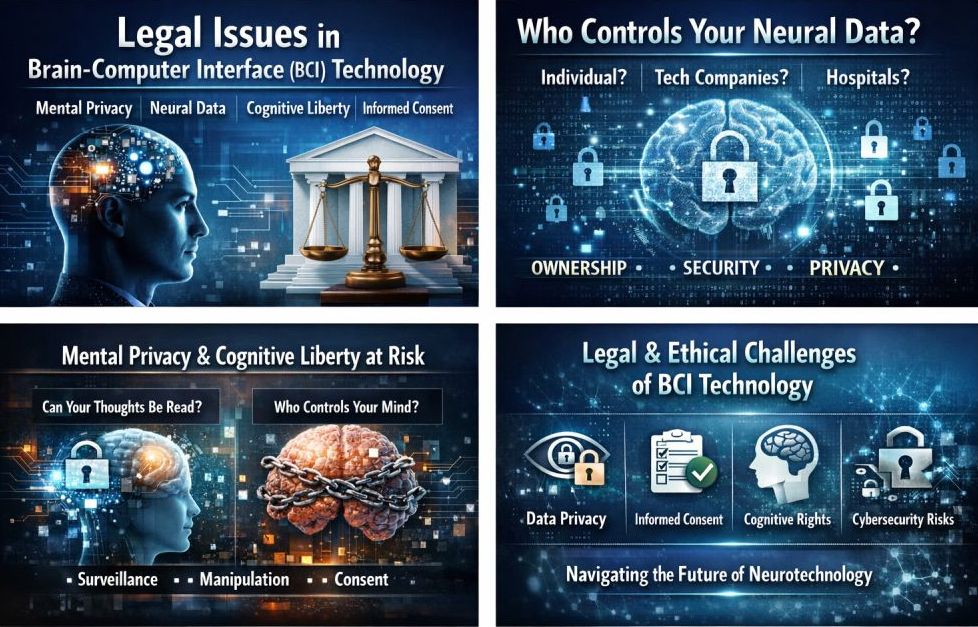

Brain-Computer Interface (BCI) technology is no longer science fiction. What once felt like a futuristic dream—connecting the human brain directly to machines—is becoming a reality. Companies like Neuralink, research institutions, and hospitals around the world are developing BCI systems that can help paralysed patients move robotic arms, restore communication for people with ALS, and even treat depression.

As this technology grows, so do legal issues. Questions about mental privacy, neural data ownership, cognitive liberty, and consent are urgent. If we ignore them, we may lose our right to keep our thoughts private.

This post explains the main legal challenges of BCI technology in plain language. It is written to help policymakers, tech developers, healthcare professionals, legal experts, and curious readers understand the risks and the possible solutions.

What Is Brain-Computer Interface (BCI) Technology?

A Brain-Computer Interface (BCI) is a system that connects the human brain directly to a computer or device. It works by recording brain signals, translating them into digital commands, and sending them to external devices.
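The record, translate, send loop described above can be sketched in a few lines of Python. This is only an illustrative toy, not how any real BCI product works: the signal is simulated, the "decoder" is a simple power threshold, and the function names (`record_signal`, `decode_command`, `send_to_device`) and the `MOVE`/`REST` commands are assumptions made up for this example.

```python
import math
import random


def record_signal(n_samples=256, amplitude=1.0, seed=0):
    """Simulate one window of a single-channel brain signal.

    Hypothetical stand-in for real recording hardware: a 10-cycle sine
    wave plus a little Gaussian noise.
    """
    rng = random.Random(seed)
    return [
        amplitude * math.sin(2 * math.pi * 10 * t / n_samples) + rng.gauss(0, 0.1)
        for t in range(n_samples)
    ]


def decode_command(signal, threshold=0.25):
    """Translate a signal window into a command by thresholding its mean power."""
    power = sum(x * x for x in signal) / len(signal)
    return "MOVE" if power > threshold else "REST"


def send_to_device(command):
    """Stand-in for the device link: returns the message that would be sent."""
    return f"device <- {command}"


if __name__ == "__main__":
    window = record_signal(amplitude=1.0)
    print(send_to_device(decode_command(window)))
```

Even in this toy version, the legally interesting point is visible: everything downstream depends on what the decoder infers from the raw signal, and the raw signal itself is what gets stored and transmitted.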

Some BCIs are invasive (implanted in the brain), while others are non-invasive (using EEG headsets). Today, BCIs are being tested for:

- Restoring movement in paralysed patients

- Treating neurological disorders

- Enhancing communication

- Improving rehabilitation

- Potential future cognitive enhancement

The medical benefits are promising, but the legal risks are just as significant and deserve equal attention.

Why Legal Issues in BCI Technology Matter Now

We are at a turning point. The law often reacts slowly to new technology, but BCI technology moves fast.

Unlike smartphones or wearables, BCIs interact with the human mind itself. They interpret neural data, which is far more sensitive than location or browsing data.

If mishandled, neural data could reveal:

- Emotional states

- Intentions

- Preferences

- Cognitive weaknesses

- Health conditions

Given these sensitivities, it becomes clear that traditional data protection laws may not be enough.

Mental Privacy: The Right to Keep Your Thoughts Private

One of the biggest legal concerns in Brain-Computer Interface technology is mental privacy.

Mental privacy means your thoughts, intentions, and inner experiences should remain private unless you choose to share them.

In everyday life, no one can access your thoughts directly. But with advanced BCIs, it may become possible to decode patterns that suggest what a person is thinking or intending.

Researchers and legal scholars, including experts from institutions like Columbia University, have argued that mental privacy should be recognised as a fundamental human right in the digital age.

A Real Concern

A few years ago, I attended a neurotechnology conference where a researcher demonstrated that machine learning models could predict whether a participant recognised certain images solely from brain signals. It was fascinating—but also deeply unsettling.

If recognition can be detected, what about attraction? Political preference? Emotional reactions?

This moment made me realise the law must move faster than technology.

Neural Data Ownership: Who Owns Your Brain Signals?

Alongside mental privacy, a second major legal issue is who owns the neural data extracted from a user’s brain.

When you use social media, your data is collected and often monetised. But brain data is not like browsing data. Neural data is:

- Highly sensitive

- Deeply personal

- Difficult to anonymise

- Potentially permanent

The core question remains: Who legally owns neural data collected by BCI devices, and how is this determined?

Is ownership assigned to the patient who generates the data, the device manufacturer that makes the BCI, the hospital overseeing treatment, or the software provider that processes the signals?

Under some regulations, such as the EU’s GDPR, biometric and health data already receive heightened protection. Neural data may need even stronger protection.

Some experts suggest neural data should be treated as a biological property, similar to organs or DNA. Others argue it should be classified under a new legal category: “neurorights.”

In 2021, Chile amended its constitution to protect brain activity and the information derived from it. This is significant.

Cognitive Liberty: The Freedom to Think Freely

Cognitive liberty is the right to control your own mental processes.

This includes:

- The right to use BCI technology

- The right to refuse BCI technology

- The right not to have your thoughts altered without consent

As BCIs become more advanced, they may not only read brain signals but also stimulate the brain. Deep-brain stimulation is already used for Parkinson’s disease.

But what happens if cognitive enhancement becomes commercial?

Imagine:

- Employers encouraging workers to use BCIs to improve productivity

- Schools pushing students to adopt memory-enhancing implants

- Insurance companies offering discounts for “optimised cognition”

Without legal safeguards, cognitive liberty could be quietly eroded.

Organisations like the World Economic Forum have begun discussing ethical frameworks for neurotechnology. Discussion is not enough. We need enforceable laws.

Consent in Brain-Computer Interface Technology

Informed consent is a basic medical ethic. BCI technology complicates consent in several ways.

1. Complexity of Technology

Many users may not fully understand how their neural data is processed or stored.

2. Long-Term Effects

BCI implants could affect personality, mood, or identity over time.

3. Data Reuse

Neural data might be reused for AI training or future research.

True informed consent must include:

- Clear explanation of risks

- Data usage transparency

- Easy withdrawal options

- Long-term monitoring
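For developers, these requirements can be made concrete as a data structure rather than a one-time checkbox. The sketch below is a minimal illustration under stated assumptions: the `ConsentRecord` class, its field names, and the purpose labels (`"treatment"`, `"ai_training"`) are hypothetical and do not come from any standard or regulation. It shows consent that is tied to specific purposes and can be withdrawn at any time.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ConsentRecord:
    """Hypothetical per-user consent record for neural data."""

    user_id: str
    risks_explained: bool  # were the risks clearly explained?
    allowed_purposes: set = field(default_factory=set)  # uses the user agreed to
    withdrawn_at: Optional[datetime] = None  # set once consent is withdrawn

    def withdraw(self):
        """Record the withdrawal; every later use is refused."""
        self.withdrawn_at = datetime.now(timezone.utc)

    def permits(self, purpose):
        """Allow a use only if consent is informed, active, and covers this purpose."""
        if self.withdrawn_at is not None or not self.risks_explained:
            return False
        return purpose in self.allowed_purposes


if __name__ == "__main__":
    record = ConsentRecord("patient-1", True, {"treatment"})
    print(record.permits("treatment"))    # consented purpose
    print(record.permits("ai_training"))  # reuse that was never agreed to
    record.withdraw()
    print(record.permits("treatment"))    # refused after withdrawal
```

The design choice worth noting is that reuse, such as AI training, is denied by default: a new purpose needs a new, explicit agreement.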

In my experience reviewing digital health policies, I have seen consent forms filled with complex legal language. Most people click “agree” without understanding what they’re agreeing to.

When your data is brain data, this casual model of consent is dangerous.

Cybersecurity: Is Hacking the Human Mind Possible?

Beyond consent, cybersecurity is another serious legal issue in BCI technology.

If brain-connected devices are hacked, attackers could access neural data, disrupt motor functions, or manipulate signals.

This goes beyond privacy: it is a matter of physical safety.

BCI systems must meet strict cybersecurity standards. Regulators should treat them like critical medical infrastructure.

The Need for New “Neurorights” Laws

Legal scholars and neuroscientists are calling for new rights known as neurorights, which may include:

- Right to mental privacy

- Right to cognitive liberty

- Right to mental integrity

- Right to psychological continuity

Countries and institutions are debating these protections. Global coordination is still limited.

Without harmonised laws, companies could operate in countries with weaker regulations. This creates ethical loopholes.

Balancing Innovation and Regulation

We must avoid two extremes:

- Over-regulation that slows life-saving innovation

- Under-regulation that harms human autonomy

BCI technology has the power to:

- Restore movement

- Treat mental illness

- Improve quality of life

Yet this technology could also fundamentally change society.

Having followed emerging tech law for years, I believe the solution lies in proactive regulation. Lawmakers must work with neuroscientists, ethicists, cybersecurity experts, and patient communities.

Practical Recommendations for Stakeholders

For Policymakers:

- Define neural data clearly in law

- Establish strict consent standards

- Recognise mental privacy as a protected right

For Companies:

- Build privacy by design

- Avoid monetising neural data

- Be transparent about algorithms

For Healthcare Providers:

- Educate patients clearly

- Monitor psychological effects

- Provide ongoing support

For Users:

- Ask how your neural data is stored

- Understand your withdrawal rights

- Choose reputable providers

Final Thoughts: Protecting the Last Frontier of Privacy

The human brain is the last frontier of privacy.

Legal issues in BCI technology are not theoretical; they are urgent. Mental privacy, neural data protection, cognitive liberty, and consent must be priorities in neurotech regulation.

If we act now, we can ensure that BCI technology heals and empowers us without risking our dignity.

If we delay, we may turn our most personal space—the mind—into just another data stream.

The question is not whether BCI technology will shape the future.

The real question is whether the law will protect us as BCI technology shapes the future.