AI-Driven Deepfake Extortion & Identity Hijacking: How AI Scams Steal Faces, Voices & Trust

A few years ago, scams were easy to recognise. Bad grammar, suspicious emails, and unknown callers gave clear clues.

Today, those clues are disappearing.

Artificial intelligence has advanced to the point where criminals no longer need to steal passwords or hack systems. Instead, they recreate people: faces, voices, emotions, and authority.

AI-driven deepfake extortion and identity hijacking is a new and dangerous cybercrime. Its real danger is how convincingly it manipulates human trust.

Let’s examine how these attacks work, why they spread so rapidly, and how people and organisations can proactively protect themselves before becoming victims.

Before diving into the methods and impact, it’s crucial to define what a deepfake is.

A deepfake is media created using artificial intelligence that makes someone appear to say or do something they never actually did.

With modern AI tools, a criminal can:

- Clone a person’s voice from short audio clips

- Animate photos into realistic videos

- Replace faces in live video calls

- Generate completely fake identities

The scary part? The results no longer look fake. Even experts often need software to detect manipulation.

If you’ve uploaded videos, voice messages, or interviews online, you may have unknowingly provided raw material that can be misused.

The Shift From Hacking Systems to Hacking Humans

Traditional cybercrime targeted machines. Deepfake crime targets psychology.

Instead of breaking into servers, attackers now create believable situations. They pressure victims to act quickly: sending money, sharing data, or granting access.

I remember reading about a case discussed among cybersecurity professionals in which an employee joined what appeared to be a routine video meeting with company leadership. The faces were familiar. The voices matched perfectly. Instructions sounded urgent but normal.

Only later did investigators realise every participant except the employee was AI-generated.

Millions were transferred before anyone questioned it.

That moment marked a shift: fraud had entered the era of synthetic reality.

How Criminals Use Hyper-Realistic Deepfakes

1. Deepfake Blackmail and Digital Extortion

One of the cruellest uses of AI involves fake compromising videos.

Criminals use public photos to create explicit or embarrassing fake content. They threaten victims with distribution unless payment is made.

Victims panic because the content looks real enough to destroy reputations, careers, or relationships.

What makes this especially harmful is emotional pressure. People often pay out of fear, even when the material is fake.

The damage isn’t just financial; it’s psychological. Victims report anxiety, shame, and long-term stress even after learning the video was fake.

2. CEO and Executive Impersonation

Businesses are now prime targets.

Attackers study company structures through LinkedIn and interviews. They clone executive voices or appearances to issue urgent financial instructions.

Imagine a video call from your CEO requesting an immediate transfer to secure a deal. The face looks right. The tone sounds familiar. The urgency feels real.

Employees obey authority — and criminals know this.

These attacks combine AI with classic social engineering, making them more convincing than phishing emails.

3. Political and Public Figure Impersonation

Deepfakes are also being used to imitate politicians and public personalities.

Fake speeches, announcements, and endorsements spread quickly online. Verification is often slow. Even when proven false, misinformation travels farther than corrections.

This creates a dangerous environment where people begin to doubt even real evidence.

4. Family Emergency Voice Scams

Perhaps the most emotionally disturbing cases involve families.

Scammers clone a child’s or a relative’s voice and call parents claiming they are in danger or need urgent money.

The voice sounds terrified. Background noise adds realism. Panic takes over before logic can intervene.

Several cybersecurity investigators have said these scams succeed because victims respond emotionally, not rationally — exactly what attackers intend.

5. Romance and Relationship Manipulation

AI face-swapping tools now allow scammers to appear as entirely different people during video chats.

Relationships are built over weeks or months. Trust grows. Then emergencies appear: medical bills, travel problems, investment opportunities.

By the time victims realise something is wrong, emotional attachment clouds judgment.

Why Deepfake Scams Work So Well

Deepfake attacks succeed because they exploit human behaviour, not technical weakness.

Three psychological triggers appear again and again:

- Authority – People trust leaders and officials without hesitation.

- Urgency – Pressure reduces critical thinking.

- Familiarity – Recognisable faces instantly lower suspicion.

Studies show most people struggle to identify deepfake videos, especially when emotions are involved.

In simple terms, our brains are not trained for synthetic reality.

Identity Hijacking: A New Kind of Digital Theft

Identity theft used to mean stolen passwords or documents.

Now, criminals recreate entire personalities.

They gather information from:

- Social media profiles

- Public videos and interviews

- Voice recordings

- Online behaviour patterns

Using AI, they build a digital version of someone who can interact with others convincingly.

In one troubling case shared within cybersecurity communities, investigators found job applicants using AI-altered faces in remote interviews to hide their real identities.

That realisation changed how experts think about online trust.

Identity is no longer fixed; it can be manufactured.

Why This Threat Is Growing Rapidly

Several factors are accelerating deepfake crime:

- Easy Access to AI Tools

Technology once limited to researchers is now available through simple apps.

- Massive Digital Footprints

Every post, reel, or podcast adds data for attackers to use.

- Remote Communication Culture

Video calls are now normal. This makes digital impersonation easier.

Criminal groups adapt faster than regulations and awareness campaigns.

Practical Ways to Protect Yourself

For Individuals

- Pause before reacting emotionally. Urgency is usually a manipulation tactic.

- Verify through another channel. Call back using a known number. Do not reply directly to suspicious messages.

- Create family verification questions. A simple private phrase can stop scams that use voice clones.

- Limit public sharing of sensitive personal content.

- Secure accounts with multi-factor authentication.
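The "call back using a known number" habit can be sketched in a few lines of Python. This is only an illustration: the contact book, its entries, and the function name `number_to_call_back` are all hypothetical. The point it shows is that the number supplied by the caller or message is deliberately ignored.

```python
from typing import Optional

# Hypothetical contact book: numbers saved before any suspicious call arrived.
KNOWN_NUMBERS = {
    "daughter": "+1-555-0100",
    "bank": "+1-555-0199",
}


def number_to_call_back(claimed_identity: str,
                        number_in_message: Optional[str] = None) -> Optional[str]:
    """Return the saved number for the claimed identity.

    number_in_message is accepted but never used: a number supplied by the
    caller may belong to the scammer. Returns None when no saved number
    exists, meaning the identity cannot be verified this way.
    """
    return KNOWN_NUMBERS.get(claimed_identity.strip().lower())
```

If the function returns nothing, there is no trusted channel yet, and the safest move is to pause rather than use the number the caller offered.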

For Businesses

- Never approve payments based only on video or voice instructions.

- Train employees regularly about deepfake risks.

- Implement verification workflows for all financial requests.

- Encourage a culture where employees can question authority safely. Empowering employees to double-check leadership requests may become one of the strongest security measures.
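As a rough illustration of what a verification workflow for financial requests might look like, here is a minimal Python sketch. The `PaymentRequest` class, its fields, and the two-approver threshold are assumptions made for this example, not a description of any real payment system. It encodes two rules: a request must be confirmed by calling back a number already on file, and it needs independent approvers other than the person who asked.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class PaymentRequest:
    """Hypothetical record of a payment request (illustrative fields only)."""
    requester: str
    amount: float
    # Set to True only after someone calls the requester on a number on file.
    callback_confirmed: bool = False
    # Names of people who have signed off on the request.
    approvers: Set[str] = field(default_factory=set)


def may_release(request: PaymentRequest, min_approvers: int = 2) -> bool:
    """Return True only if the request passed both out-of-band checks."""
    # A video or voice instruction alone is never enough.
    if not request.callback_confirmed:
        return False
    # The requester cannot count as an approver of their own request.
    independent = request.approvers - {request.requester}
    return len(independent) >= min_approvers
```

The design choice is that no single convincing call can move money: even a flawless impersonation of an executive still has to survive a callback and sign-off from people who were not on that call.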

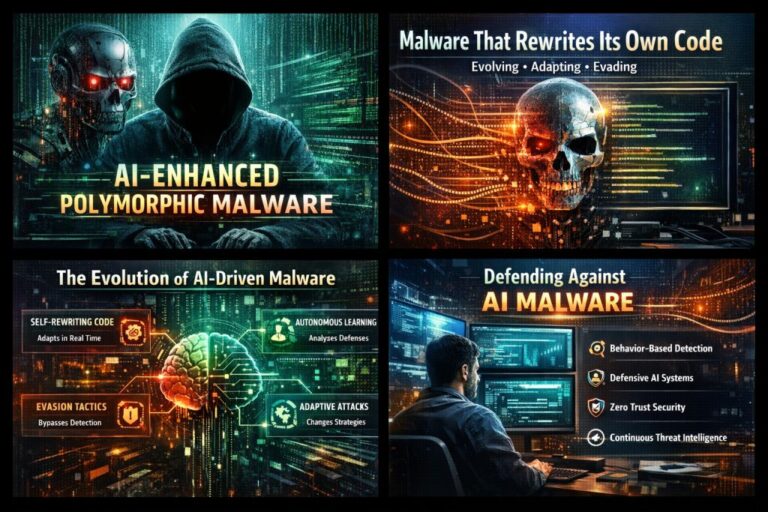

Fighting AI With AI

The cybersecurity industry is now developing detection tools that analyse subtle inconsistencies invisible to humans, such as facial micro-movements, audio patterns, and digital artefacts.

Still, technology alone won’t solve the problem.

Awareness remains the strongest defence.

The Real Cost: Loss of Trust

The biggest danger of deepfakes may not be financial loss.

It’s uncertainty.

When people can no longer trust digital evidence or conversations, society enters unfamiliar territory. Journalism, business, and relationships all rely on authenticity.

Deepfakes challenge that foundation.

Final Thoughts

AI itself is not the enemy. Like any powerful tool, it reflects how humans choose to use it.

But one truth is becoming clear:

- Seeing is no longer believing. Hearing is no longer proof.

Digital literacy will soon matter as much as cybersecurity software. Verification will become a daily habit, not just a technical process.

Do not respond with fear. Actively seek information, question suspicious interactions, and share your knowledge. Make digital awareness a daily practice for yourself and your community.

To protect yourself and your organisation: understand the threat of deepfakes, recognise manipulation tactics, pause and verify before acting, reduce public exposure of personal information, and foster a culture of questioning and verification. Finally, remember that digital trust is now a critical asset to safeguard every day.